Introduction

Developers are hired to build software. But a surprising amount of their work week disappears into everything that surrounds building: context-switching between tools, triaging tickets, and manually assembling release notes from scattered Jira tickets and GitHub commits. In a typical enterprise setup, a plethora of these tools exists to ensure consistency in practices and standards. However, complexity multiplies with each new tool or platform.

While engineering organizations allocate budget for these tools, nobody budgets for the time and cognitive load they impose on developers, nor does anyone track the invisible cost to sprint capacity. But every engineering leader feels it: that nagging sense that the team is busy all the time yet shipping slower than the headcount would suggest.

And when leaders do try to fix it, they optimize the wrong layer. Smarter code completion. Faster IDEs. Better CI pipelines. All of it is necessary; none of it is sufficient. Because the constraint in most organizations isn’t how fast a developer can write a function. It’s how fast the system around them can move context, decisions, and artifacts from one stage of delivery to the next.

This is the invisible tax on software delivery. And it compounds with every tool you add, every team you scale, every compliance requirement you absorb.

The Missing Layer

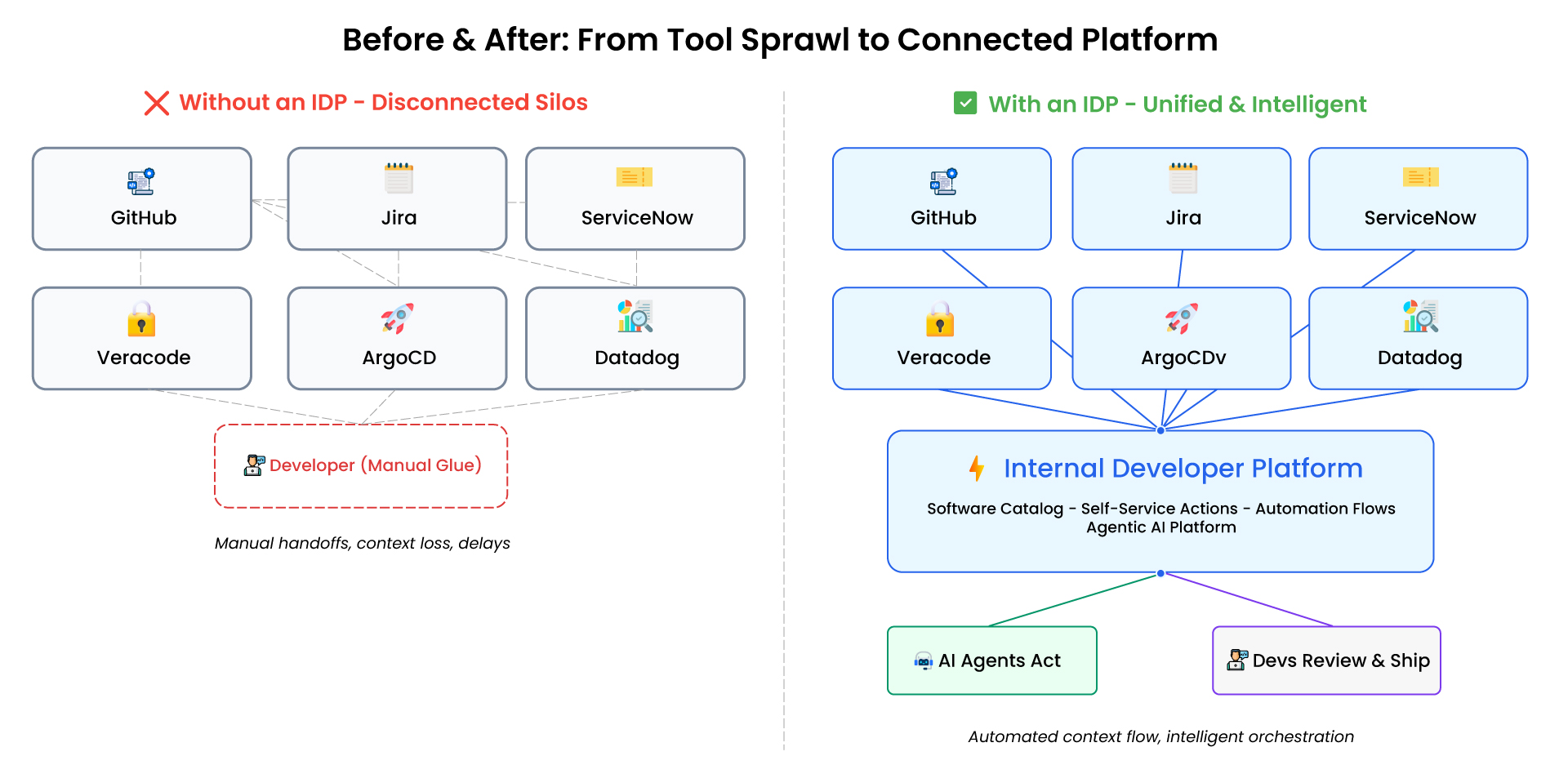

Here’s a scenario that will sound painfully familiar. A developer needs to understand the current state of the service. The code lives in GitHub. The open bugs are in Jira. The incident history sits in ServiceNow. The deployment status is in ArgoCD. The security posture is in Veracode. Monitoring is in Datadog. Access management is somewhere else entirely.

Six tools. Six logins. Six mental models. And none of them talk to each other in a way that gives the developer, their manager, the security team, or the product owner a coherent picture of reality.

Imagine onboarding a new developer into this environment. They don’t just need to learn the codebase; they need to learn six tools, the unofficial relationships between them, and the tribal knowledge about which system is the source of truth for what. Internal Developer Platforms (IDPs) compress that ramp-up by giving every developer, new or tenured, a single coherent model of reality.

This isn’t a tooling failure. Each of these platforms is excellent at what it does. The failure is architectural. There’s no connective layer, no single place where a service, its code, its tickets, its vulnerabilities, its deployments, and its incidents exist as a connected entity rather than isolated records in isolated systems.

The consequences cascade quietly. Security scans produce vulnerability reports that nobody checks daily. Someone eventually triages them into tickets, manually. Those tickets get assigned, but the developer receiving them has to reverse-engineer context from a vulnerability ID and a file path. Compliance teams in ServiceNow need visibility into remediation progress, so someone manually mirrors the Jira status. Release time arrives, and someone spends hours cross-referencing commits against tickets to assemble notes that are inevitably incomplete.

Every one of these gaps is a handoff. Every handoff is a delay. Every delay is an opportunity for context to degrade, priorities to drift, and work to fall through the cracks.

Organizations don’t need another tool. They need a layer that connects the tools they already have.

The Connecting Layer

Internal Developer Platforms (IDPs) have become one of the most discussed concepts in platform engineering, and for good reason. It’s no coincidence that Gartner projects that 80% of large software engineering organizations will have platform engineering teams by 2026. But the way IDPs are typically described (“a portal for developers,” “a self-service layer,” “a golden path to production”) undersells what they actually solve.

An IDP isn’t a dashboard. It’s an abstraction layer.

Think about what made cloud platforms transformative. It wasn’t that they gave you virtual machines. It’s that they abstracted away the physical infrastructure so teams could focus on what they were building rather than where it was running. IDPs do the same thing for the delivery lifecycle. They abstract away the operational complexity, the six tools, the manual handoffs, the tribal knowledge about which system holds which truth, and present a unified model of your software and its entire lifecycle.

At their best, IDPs provide three things that no individual tool in your stack can. First, a software catalog: a living map of every service, environment, resource, data contract, and artifact in your organization, with the relationships between them. Not a static wiki. A dynamic, API-driven model that reflects reality in real time. Instead of reconstructing context across six tabs, the developer sees one view of their service: its open vulnerabilities, recent deployments, pending tickets, and incident history, all connected. Second, self-service actions: the ability for developers to trigger complex operations (deploy a service, provision an environment, escalate an incident) from a single interface, backed by automation that orchestrates across the underlying tools. Third, workflow automation: event-driven pipelines that respond to changes in your catalog and execute multi-step processes without human intervention.

When these three capabilities converge, something fundamental shifts. The delivery lifecycle stops being a series of disconnected steps performed by humans navigating between tools, and starts becoming a connected system where context flows automatically and operations trigger based on events rather than memory.

What the Connecting Layer Needs

The IDP landscape is maturing fast, with strong options emerging across the market. When we evaluated platforms for our client engagements, three capabilities separated the solutions that looked good in demos from the ones that held up in production. These aren’t tied to any single vendor — they’re architectural qualities worth evaluating regardless of which platform you choose.

A data model that maps to reality

Most IDPs center their catalog around services and deployments. That’s a start, but it’s not enough. The platform you choose should let you define any entity type (services, cloud resources, Jira tickets, ServiceNow incidents, GitHub repositories, Veracode findings, releases) and create typed relationships between them. This means your catalog doesn’t just know that Service A exists. It knows that Service A has 12 open vulnerabilities and three unresolved incidents, was last deployed on Tuesday, and has a pending release with 47 associated Jira tickets. That relational richness is what turns a catalog from a directory into an operational nervous system. Without it, you’ve built a prettier dashboard, not a connective layer.
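To make the idea of typed relationships concrete, here is a minimal sketch in Python. It is not any vendor’s data model: the `Entity` and `Catalog` classes, the relation names, and the sample identifiers are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    kind: str          # e.g. "service", "vulnerability", "incident"
    identifier: str


class Catalog:
    """Stores typed, directed relationships between entities (hypothetical model)."""

    def __init__(self) -> None:
        # (source entity, relation name) -> list of target entities
        self._edges = defaultdict(list)

    def relate(self, source: Entity, relation: str, target: Entity) -> None:
        self._edges[(source, relation)].append(target)

    def related(self, source: Entity, relation: str) -> list:
        return list(self._edges.get((source, relation), []))

    def summary(self, source: Entity) -> dict:
        """Count related entities per relation type for one entity."""
        return {
            relation: len(targets)
            for (entity, relation), targets in self._edges.items()
            if entity == source
        }


# Illustrative data only: mirrors the "Service A" example in the text.
catalog = Catalog()
service_a = Entity("service", "service-a")
for n in range(12):
    catalog.relate(service_a, "has_vulnerability", Entity("vulnerability", f"finding-{n}"))
for n in range(3):
    catalog.relate(service_a, "has_incident", Entity("incident", f"incident-{n}"))

print(catalog.summary(service_a))
# {'has_vulnerability': 12, 'has_incident': 3}
```

The point of the sketch is that one query against the catalog answers a question that would otherwise need several tools; a production catalog would be populated by integrations rather than by hand.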

Self-service actions that go beyond templates

The platform’s self-service framework should let you define custom operations that developers and teams trigger directly from the portal, backed by automation workflows that can orchestrate across any system with an API. This isn’t “click a button to create a Kubernetes namespace.” It’s “click a button to trigger a multi-step pipeline that creates tickets, invokes agents, updates compliance records, and posts results back to the catalog.” The action surface should be as broad as your automation layer. If the IDP constrains you to predefined patterns, you’ll outgrow it the moment your workflows get interesting.
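A self-service action of this kind can be thought of as a named, ordered list of steps that share a context. The sketch below assumes that shape; `register_action`, `run_action`, and the three stub steps are hypothetical names, and each stub stands in for a call to a real system’s API.

```python
from typing import Callable, Dict, List

Step = Callable[[dict], dict]
ACTIONS: Dict[str, List[Step]] = {}


def register_action(name: str, steps: List[Step]) -> None:
    ACTIONS[name] = steps


def run_action(name: str, context: dict) -> dict:
    """Run each step in order, passing the accumulated context along."""
    for step in ACTIONS[name]:
        context = step(context)
    return context


# Stub steps (assumptions): a real step would call the relevant system here.
def create_tickets(ctx: dict) -> dict:
    return {**ctx, "tickets": [f"ticket-for-{ctx['service']}"]}


def invoke_agent(ctx: dict) -> dict:
    return {**ctx, "agent_result": f"analyzed {len(ctx['tickets'])} ticket(s)"}


def update_compliance_record(ctx: dict) -> dict:
    return {**ctx, "compliance_updated": True}


register_action(
    "remediate_service",
    [create_tickets, invoke_agent, update_compliance_record],
)

result = run_action("remediate_service", {"service": "service-a"})
print(result["agent_result"])  # analyzed 1 ticket(s)
```

Because steps are plain functions, adding a new system to an action means adding a step rather than changing the framework, which is the breadth the paragraph above argues for.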

An agentic layer that treats AI as a first-class participant

This is the capability that moves an IDP from “useful portal” to “genuinely different.” The platform should allow AI agents to be embedded directly into automation workflows, not as a bolt-on integration, but as native actors that can receive context from the catalog, reason about it, take actions, and feed results back. Critically, these agents need to operate within the same governance and observability framework as any other automation. If your AI agents aren’t auditable, controllable, and integrated into the same pipelines that handle everything else, you haven’t built intelligent automation; you’ve built a shadow IT problem with better marketing.
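One way to picture “auditable and controllable” is an agent wrapper that checks every action against an allow-list and records every attempt, permitted or not. This is a sketch under that assumption; `GovernedAgent` and the action names are illustrative and do not describe a specific platform’s governance API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GovernedAgent:
    name: str
    allowed_actions: frozenset
    audit_log: list = field(default_factory=list)

    def act(self, action: str, target: str) -> bool:
        """Record every attempt; only allow-listed actions are permitted."""
        permitted = action in self.allowed_actions
        self.audit_log.append(
            {
                "agent": self.name,
                "action": action,
                "target": target,
                "permitted": permitted,
                "at": datetime.now(timezone.utc).isoformat(),
            }
        )
        return permitted


# The agent may open pull requests but not merge them (hypothetical policy).
agent = GovernedAgent("remediation-agent", frozenset({"open_pull_request"}))
opened = agent.act("open_pull_request", "service-a")
merged = agent.act("merge_pull_request", "service-a")

print(opened, merged, len(agent.audit_log))  # True False 2
```

Denied attempts are logged as well as permitted ones, so the audit trail shows what the agent tried to do, not only what it did.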

The Reasoning Layer

In our client work, that third capability changed the game for us: automation workflows that don’t just move data between systems but actually reason about it and produce meaningful output.

With this foundation in place, we set out to tackle two of the most persistent coordination problems in software delivery, problems that every engineering team recognizes, but few have successfully automated. The first is the gap between detection and remediation.

Vulnerabilities That Sit and Age

Every mature engineering organization runs security scans. The detection side of the equation is largely solved; tools like Veracode, Snyk, and SonarQube can identify vulnerabilities with impressive accuracy and granularity. What’s not solved is what happens next.

In most organizations, the post-detection workflow looks something like this: the security team reviews the scan results, manually creates Jira tickets for vulnerabilities that warrant attention, cross-references with ServiceNow for compliance tracking, assigns tickets to the appropriate engineering teams, and then… waits. Developers eventually pick up the tickets, days or weeks later, depending on sprint priorities. When they do, they spend significant time rebuilding context: what’s the vulnerability, where’s the affected code, what’s the recommended fix, what dependencies might break.

The cost isn’t just the developer’s hours. It’s the exposure window. Every day a known vulnerability sits in a backlog is a day it could be exploited.

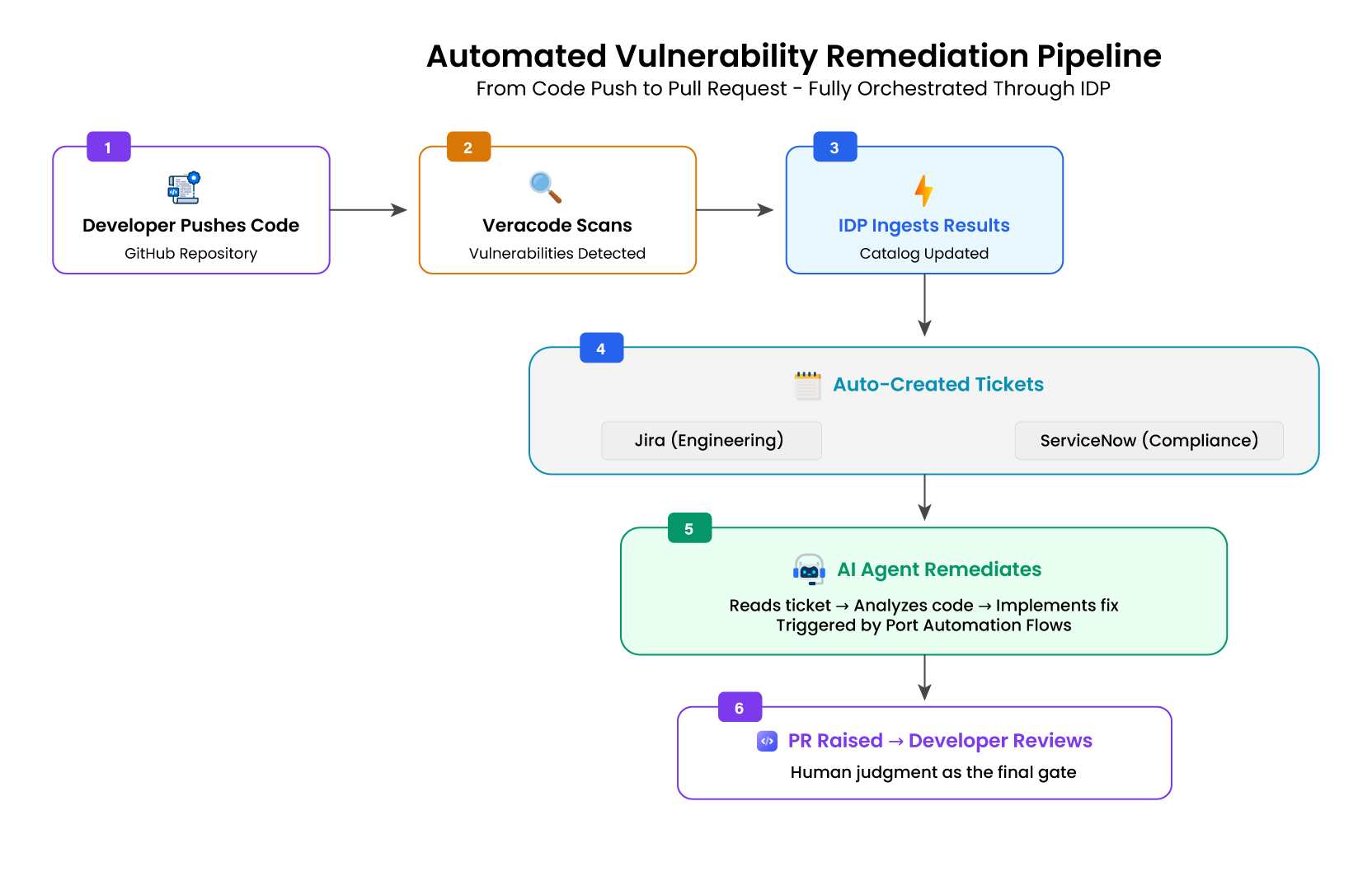

We built a pipeline that compresses this entire cycle, from code push to remediation pull request, into a single automated flow.

The Flow

A developer pushes code. Veracode scans it and produces a vulnerability report. The platform ingests the scan results and, for each identified vulnerability, automatically creates structured tickets in both Jira and ServiceNow, enriched with the vulnerability type, severity, CWE classification, affected file paths, and remediation guidance. Jira serves the engineering workflow. ServiceNow serves compliance and incident tracking. Both tickets are created simultaneously and linked through the IDP’s catalog, eliminating the manual triage step entirely.
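The fan-out from one finding to two linked tickets might look roughly like the sketch below. The `Finding` fields follow the enrichment list above, but the payload shape, the field names, and the sample finding are assumptions; they are not the Veracode, Jira, or ServiceNow schemas.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    finding_id: str
    vulnerability_type: str
    severity: str
    cwe: str
    file_paths: tuple
    remediation: str


def build_ticket_payloads(finding: Finding) -> dict:
    """Build one payload per system; both carry the same finding_id as the link."""
    shared = {
        "finding_id": finding.finding_id,
        "summary": f"[{finding.severity}] {finding.vulnerability_type} ({finding.cwe})",
        "affected_files": list(finding.file_paths),
        "remediation": finding.remediation,
    }
    return {
        "jira": {**shared, "purpose": "engineering workflow"},
        "servicenow": {**shared, "purpose": "compliance and incident tracking"},
    }


# Illustrative sample, not real scan output.
sample = Finding(
    finding_id="finding-001",
    vulnerability_type="SQL Injection",
    severity="High",
    cwe="CWE-89",
    file_paths=("src/orders/query.py",),
    remediation="Use parameterized queries.",
)
payloads = build_ticket_payloads(sample)
print(payloads["jira"]["summary"])  # [High] SQL Injection (CWE-89)
```

Carrying the same `finding_id` in both payloads is what lets a catalog treat the two tickets as views of one vulnerability rather than two unrelated records.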

Here’s where the agentic capabilities transform the process. Once tickets exist, the IDP’s automation flows trigger an AI agent designed for vulnerability remediation. The agent reads the Jira ticket: the full vulnerability description, the classification, and the affected code location. It analyzes the codebase context: what the vulnerable code does, how it’s called, and what dependencies surround it. Based on this analysis, the agent defines a remediation approach, implements the fix, and raises a pull request in GitHub with a clear description of what changed and why, referencing the original ticket and Veracode finding.

Release Notes That Nobody Wants to Write

Release notes sound trivial until you’ve tried to produce accurate ones for a complex product with multiple contributing teams, dozens of repositories, and hundreds of tickets per release cycle.

The challenge isn’t writing prose. It’s assembling context. Which Jira tickets are included in this release? Which GitHub commits correspond to those tickets? What has changed since the last release? Are any of these changes related to work from previous releases: a bug fix that addresses something partially resolved two versions ago, or a feature that builds on foundational work from last quarter?

Answering these questions manually requires someone with deep institutional knowledge navigating between Jira and GitHub, relying on memory as much as metadata. This person becomes a bottleneck. The notes they produce are only as complete as their recall.

We built a pipeline that eliminates the assembly problem entirely.

The Flow

The platform integrates Jira and GitHub into a unified model where every release, ticket, commit, and pull request exists as a connected entity. When a release is tagged in GitHub, the IDP’s automation flows trigger the release notes generation pipeline.
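The trigger described above is an instance of event-driven dispatch: a handler subscribes to an event type and runs when that event arrives. A minimal sketch follows; the `EventBus` class, the `release.tagged` event name, and the payload fields are hypothetical and are not GitHub’s webhook schema.

```python
from collections import defaultdict
from typing import Callable


class EventBus:
    def __init__(self) -> None:
        self._handlers = defaultdict(list)

    def on(self, event_type: str) -> Callable:
        """Decorator that subscribes a handler to one event type."""
        def subscribe(handler: Callable) -> Callable:
            self._handlers[event_type].append(handler)
            return handler
        return subscribe

    def emit(self, event_type: str, payload: dict) -> list:
        """Call every subscribed handler and collect the results."""
        return [handler(payload) for handler in self._handlers.get(event_type, [])]


bus = EventBus()


@bus.on("release.tagged")
def start_release_notes(payload: dict) -> str:
    # A real handler would start the release notes pipeline here.
    return f"release notes pipeline started for {payload['repo']}@{payload['tag']}"


results = bus.emit("release.tagged", {"repo": "service-a", "tag": "v3.2"})
print(results[0])  # release notes pipeline started for service-a@v3.2
```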

The agentic platform processes the release by pulling every associated ticket and commit. But it doesn’t produce a flat list. It segments changes by category (features, bug fixes, performance improvements, breaking changes, dependency updates) based on ticket metadata and commit patterns.
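A rule-based version of this segmentation can be sketched as follows. It assumes commit messages follow Conventional Commits style prefixes and that tickets expose an issue type; both are assumptions for illustration, as are the ticket keys. The pipeline described here uses an agent, which can handle cases that fixed rules miss.

```python
import re
from collections import defaultdict

# Checked in order: the first matching commit pattern wins.
CATEGORY_BY_COMMIT_PATTERN = [
    (re.compile(r"^\w+(\(.+\))?!:"), "Breaking changes"),
    (re.compile(r"^perf(\(.+\))?:"), "Performance improvements"),
    (re.compile(r"^(build|chore)\(deps\):"), "Dependency updates"),
    (re.compile(r"^feat(\(.+\))?:"), "Features"),
    (re.compile(r"^fix(\(.+\))?:"), "Bug fixes"),
]
# Fallback when the commit message carries no recognizable prefix.
CATEGORY_BY_ISSUE_TYPE = {"Story": "Features", "Bug": "Bug fixes"}


def categorize(change: dict) -> str:
    for pattern, category in CATEGORY_BY_COMMIT_PATTERN:
        if pattern.match(change["commit_message"]):
            return category
    return CATEGORY_BY_ISSUE_TYPE.get(change.get("issue_type"), "Other")


def group_changes(changes: list) -> dict:
    grouped = defaultdict(list)
    for change in changes:
        grouped[categorize(change)].append(change["ticket"])
    return dict(grouped)


# Illustrative changes only.
changes = [
    {"ticket": "T-1", "issue_type": "Story", "commit_message": "feat(api)!: drop v1 endpoints"},
    {"ticket": "T-2", "issue_type": "Bug", "commit_message": "Update login copy"},
    {"ticket": "T-3", "issue_type": "Task", "commit_message": "chore(deps): bump requests"},
    {"ticket": "T-4", "issue_type": "Task", "commit_message": "perf: cache catalog lookups"},
]
grouped = group_changes(changes)
print(grouped["Breaking changes"])  # ['T-1']
```

Commit patterns take precedence over ticket metadata in this sketch, so a breaking change filed as a story still lands under breaking changes.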

But the real value wasn’t categorization. The piece that surprised stakeholders most was change lineage. The agent compares the current release against previous releases and surfaces relationships. If a bug fix in version 3.2 addresses an issue partially mitigated in 3.1, that connection is shown. If a feature builds on foundational work from two releases ago, that context is included. Release notes transform from a flat changelog into a narrative of how the product is evolving: not just what changed, but how changes relate to each other across versions.
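Lineage of this kind reduces to a lookup once tickets, releases, and ticket links live in one model. The sketch below assumes tickets are grouped by release version and that links between tickets are already known; the function names, the dotted numeric version format, and the sample data are all illustrative.

```python
def parse_version(version: str) -> tuple:
    """Turn "3.2" into (3, 2) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def change_lineage(release: str, tickets_by_release: dict, links: dict) -> list:
    """Return (ticket, linked ticket, earlier release) for links into older releases."""
    shipped_in = {
        ticket: version
        for version, tickets in tickets_by_release.items()
        for ticket in tickets
    }
    lineage = []
    for ticket in tickets_by_release[release]:
        for linked in links.get(ticket, []):
            earlier = shipped_in.get(linked)
            if earlier and parse_version(earlier) < parse_version(release):
                lineage.append((ticket, linked, earlier))
    return lineage


# Illustrative data: T-140 follows up on T-101, which shipped in 3.1.
tickets_by_release = {"3.0": ["T-90"], "3.1": ["T-101"], "3.2": ["T-140", "T-141"]}
links = {"T-140": ["T-101"], "T-141": ["T-90", "T-999"]}

print(change_lineage("3.2", tickets_by_release, links))
# [('T-140', 'T-101', '3.1'), ('T-141', 'T-90', '3.0')]
```

Links to tickets that never shipped, such as `T-999` here, are skipped, so the notes mention only relationships that a reader can trace to an earlier release.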

The output is generated, categorized, and contextualized automatically, the moment a release is tagged. The team reviews and refines rather than building from scratch.

Conclusion

If your engineering organization feels busy but slow, if your developers are productive in isolation, but the system around them can’t keep pace, then the problem probably isn’t your people or your tools. It’s the space between your tools. The handoffs. The manual bridges. The context that evaporates every time work moves from one system to another.

That’s where Internal Developer Platforms earn their value. Not as another dashboard, but as the layer that finally connects what’s been disconnected.

We didn’t begin with a grand vision of autonomous delivery pipelines. We began with specific pain: vulnerability tickets aging in backlogs and release notes that took hours to assemble. The IDP gave us a platform to connect the tools. The agentic layer gave us the intelligence to act on those connections. The specific platform matters less than the architectural pattern: a unified catalog that connects your toolchain, self-service actions that orchestrate across it, and AI agents that reason within it.

The most effective automation doesn’t replace humans. It removes the work between the work: the triage, the cross-referencing, and the manual bridging of systems that was never human work to begin with. What remains is the judgment, the review, and the architectural thinking that developers are trained for but rarely have time to do.

The invisible tax is real. But it’s not inevitable. The organizations that recognize it and build the connective layer to eliminate it will ship faster, ship safer, and finally let their engineers do the work that actually matters.