Senior leaders who have not written code in the last ten years can now turn a vision into a working prototype over a weekend. They build these prototypes to solve real problems, or to make an idea concrete as a working application. This is changing how they perceive both the pace at which software is being created and the pace at which it CAN be created.

The question that follows is: “So if I can do this in a day, why is my team telling me their version takes six months?”

I have had some version of that conversation multiple times in the last eighteen months. The leader is sometimes a CEO, sometimes a VP, once a CTO. The thing they built is roughly the same shape every time. The pitch that follows is the same too — could we cut the project budget, could we move the deadline up, why are engineers acting like this is hard. And the misunderstanding underneath the question is the same.

It’s worth writing about, because once a leader has had this experience, you don’t argue them out of it — and you shouldn’t try. The speed they saw was real. What’s worth doing is helping them see what the experience left out.

Running is the easy part

Here’s what was true about the prototypes being created. The code works. The screens render. The buttons do things. These prototypes are, by the local definition of the word, software.

Here’s what was also true and was not visible from the outside. The whole thing usually ran on the leader’s own laptop. There was no auth beyond a hardcoded check, no audit log, no rate limiting, no database connection pooling, no error handling for the shape of the data real users would send, no plan for what happens when two people open the same record, no way to roll a change back, no way to know if it had silently corrupted itself.
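To make one item on that list concrete, here is a minimal sketch of the "two people open the same record" problem. Everything in it is hypothetical and for illustration only: `RecordStore` is an in-memory stand-in for a real database table, and the version check is one common approach (optimistic concurrency), not a claim about how any particular prototype or production system handles it.

```python
from dataclasses import dataclass


class StaleWriteError(Exception):
    """The record changed after the caller read it."""


@dataclass
class Record:
    value: str
    version: int = 0


class RecordStore:
    """Hypothetical in-memory store standing in for a real database table."""

    def __init__(self):
        self._rows = {}

    def create(self, key, value):
        self._rows[key] = Record(value)

    def read(self, key):
        row = self._rows[key]
        return Record(row.value, row.version)  # a copy, like a fetched row

    def save_last_write_wins(self, key, value):
        # Prototype behavior: whoever saves last silently overwrites the other.
        row = self._rows[key]
        row.value = value
        row.version += 1

    def save_if_unchanged(self, key, value, expected_version):
        # Optimistic check: refuse the write if someone else saved in between.
        row = self._rows[key]
        if row.version != expected_version:
            raise StaleWriteError(
                f"{key!r} is at version {row.version}, expected {expected_version}"
            )
        row.value = value
        row.version += 1
```

With `save_last_write_wins`, two people who read the same record and both save will both see success, and one edit quietly disappears. With `save_if_unchanged`, the second save raises `StaleWriteError` and someone has to decide what happens next. That decision is the part a weekend demo never has to make.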

None of this was skipped on purpose. In the leader’s mind, nothing was skipped at all. These things were never thought about, because the agent didn’t surface them, and the demo never reached a point where their absence would have shown.

This is the point Charity Majors keeps making that almost nobody outside engineering ever hears:

Writing code is the easiest part of software engineering, and it’s getting easier by the day. The hard parts are what you do with that code—operating it, understanding it, extending it, and governing it over its entire lifecycle.

The leaders see the easy part working and take that as evidence the rest is easy too. There is no demo you can stage that surfaces the hard part. The hard part doesn’t exist on a single machine on a Saturday afternoon. It only exists in production, over time, under load, with users you don’t control.

None of this is to say the prototype was worthless. The compression is genuine. Getting from idea to something running has gotten dramatically faster, and that shift is permanent and worth taking seriously. The mistake is assuming the same compression carries through to the part the prototype never had to handle.

The look of slop

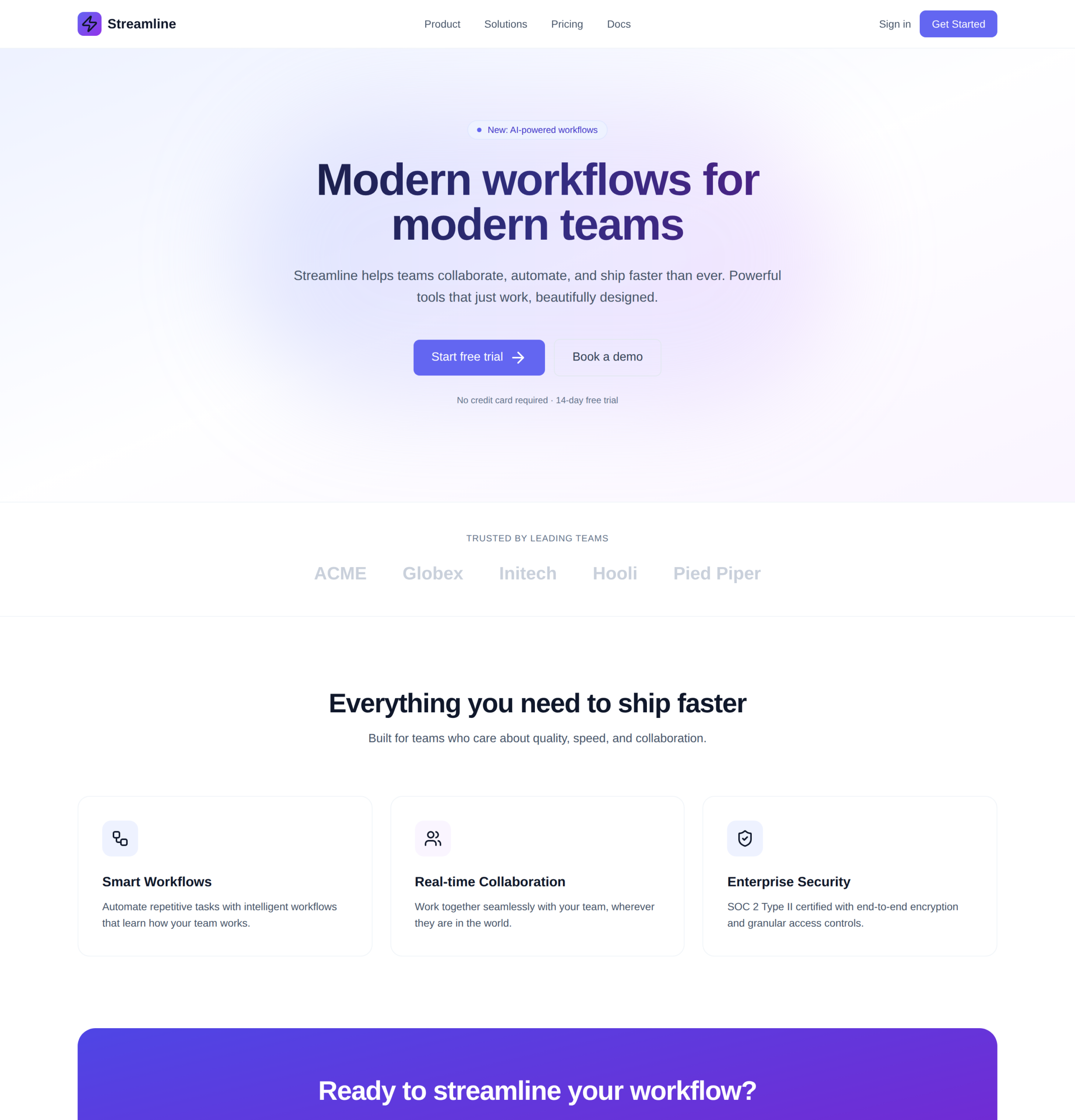

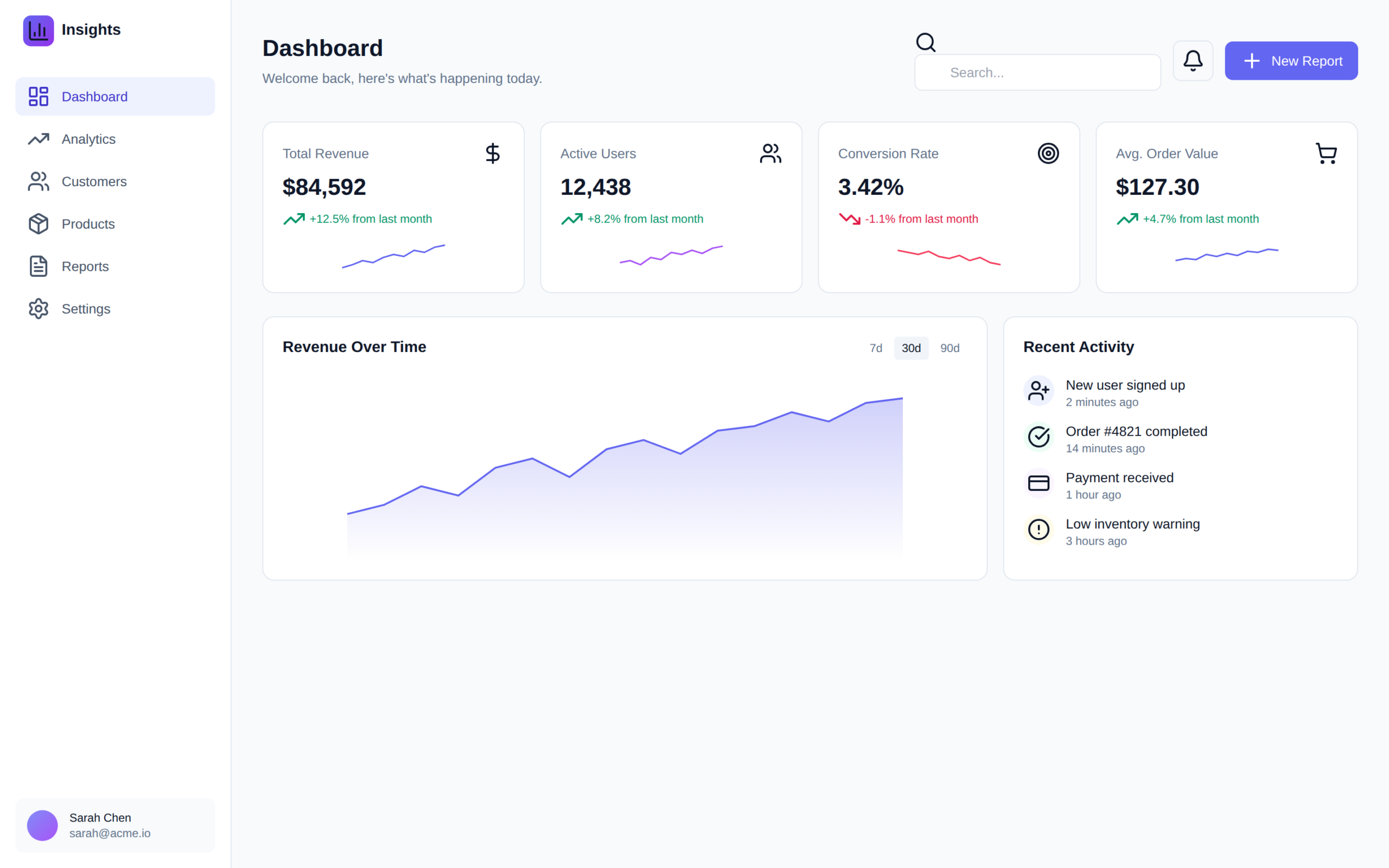

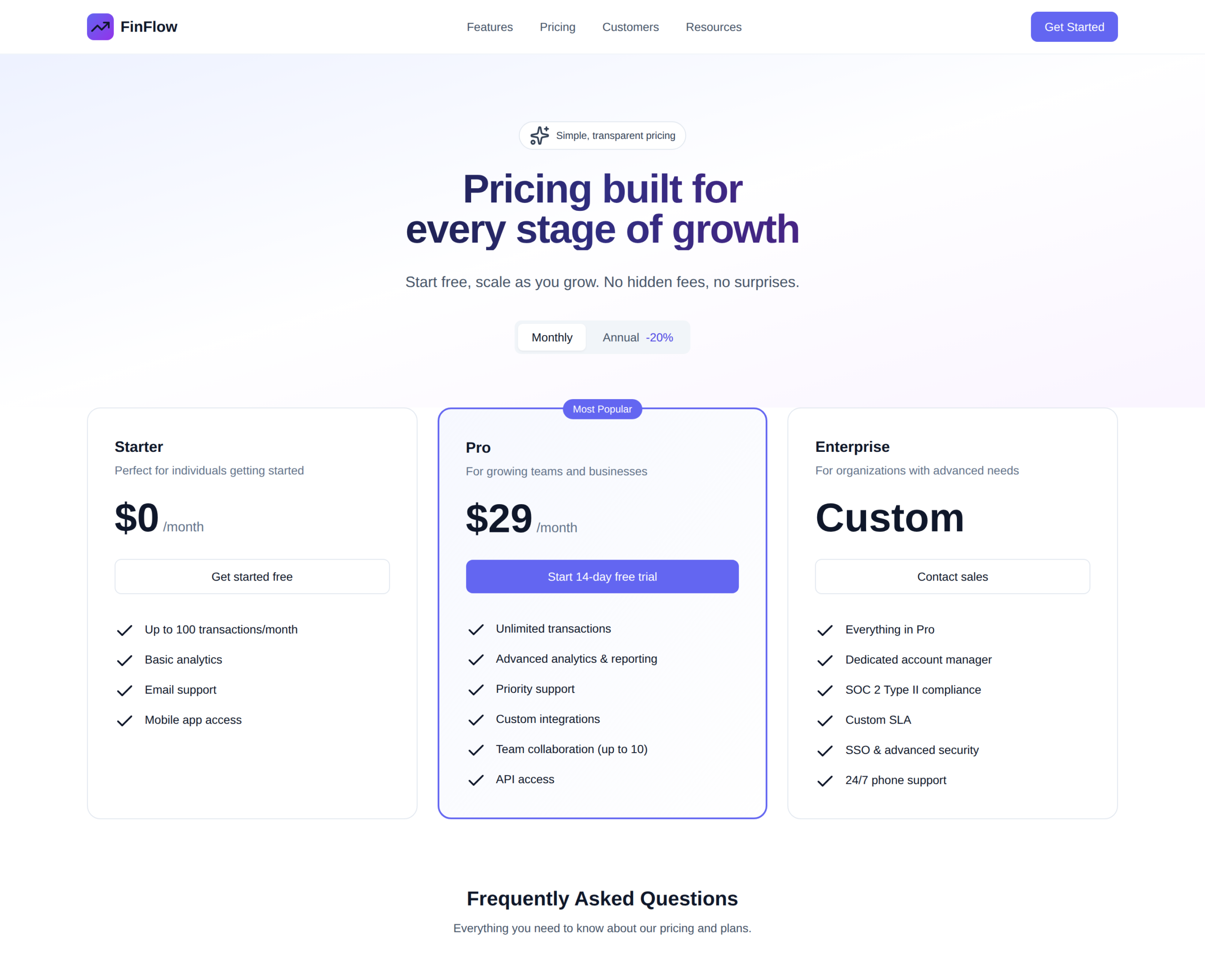

Let me make this less abstract. I asked Claude to build me three things, with the kind of prompts a leader would actually give — no design system, no brand, no constraints. Build me a landing page for a SaaS startup. Design a dashboard for an analytics tool. Make a pricing page for a fintech app.

What came back was three different products, but it was also three of the same product.

The indigo button. The same indigo button highlighted on the middle pricing tier. The lucide icons in rounded-square containers. The white-to-purple gradient hero. The three-feature grid. The KPI cards with sparklines. The “Welcome back, here’s what’s happening today” subtitle. The “Most Popular” badge. None of this was specified in any of the prompts. All of it came out anyway.

Adam Wathan, who created Tailwind CSS, posted something on X last August that I keep coming back to:

I’d like to formally apologize for making every button in Tailwind UI bg-indigo-500 five years ago, leading to every AI generated UI on earth also being indigo

It’s a joke. It’s also a perfect mechanism. A 2020 styling default became the most copied button in tutorials, blog posts, GitHub. That public corpus became training data. The model learned that the way a button looks is bg-indigo-500, and now generates that with the gravity of a probability distribution.

Anthropic, who make Claude, ship a publicly available system prompt fragment for frontend work that opens with this admission:

You tend to converge toward generic, “on distribution” outputs. In frontend design, this creates what users call the “AI slop” aesthetic.

The model’s manufacturer is telling you that, by default, the model gives you the same thing it gave the last person, and they have to actively prompt it not to. They literally use the word slop.

Which is also why the same tools, in the hands of someone who knows what to ask for, produce work that’s genuinely good. The default isn’t the ceiling. The default is what you get when nobody insists on more.

What the indigo button is a tell of

If the sameness only showed up in the visual layer, this would just be a design problem. But the gravity well that produces the indigo button also produces the way the agent handles errors, the way it structures the database, the way it decides what state lives on the server versus the client, the auth pattern, the directory layout, the test framework, the logging.

You can’t see those choices in a prototype. You can see the indigo button. The button is a tell. It’s the same observation a designer would make standing in a stranger’s living room: when every visible decision is the default catalog choice, the invisible ones are too.

Simon Willison made a point about this last March that I think about whenever someone tells me their AI prototype is basically done:

The real risk from using LLMs for code is that they’ll make mistakes that aren’t instantly caught by the language compiler or interpreter.

The mistakes that crash are the cheap ones. They show up immediately, the agent gets a chance to fix them, you never see them. The mistakes that survive are the ones that look fine. An off-by-one in a date filter. A regex that almost matches. A retry loop with no backoff. An auth check that depends on a header the load balancer strips. None of those crash. None of them would have shown up on a Saturday afternoon. All of them produce the kind of email that arrives at 3am six months in.
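Here is a small sketch of what the first of those can look like. The data and function names are made up for illustration; the point is only that the buggy version runs cleanly, returns plausible results, and is wrong at the boundary.

```python
from datetime import date, datetime, timedelta

# Hypothetical order records: (order id, timestamp).
ORDERS = [
    ("a", datetime(2024, 3, 1, 9, 0)),
    ("b", datetime(2024, 3, 31, 0, 0)),
    ("c", datetime(2024, 3, 31, 15, 30)),
    ("d", datetime(2024, 4, 1, 0, 0)),
]


def orders_in_range_buggy(orders, start: date, end: date):
    """Looks right and never crashes, but drops almost all of the last day."""
    lo = datetime.combine(start, datetime.min.time())
    hi = datetime.combine(end, datetime.min.time())  # midnight at the START of `end`
    return [oid for oid, ts in orders if lo <= ts <= hi]


def orders_in_range_fixed(orders, start: date, end: date):
    """Half-open interval: from the start of `start` up to the start of the day after `end`."""
    lo = datetime.combine(start, datetime.min.time())
    hi = datetime.combine(end + timedelta(days=1), datetime.min.time())
    return [oid for oid, ts in orders if lo <= ts < hi]


march = (date(2024, 3, 1), date(2024, 3, 31))
print(orders_in_range_buggy(ORDERS, *march))  # ['a', 'b']
print(orders_in_range_fixed(ORDERS, *march))  # ['a', 'b', 'c']
```

Order "c", placed on the afternoon of March 31, is missing from the buggy March report. No exception, no warning, and a demo with a handful of test rows would likely never notice.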

What I’d ask, and what I wouldn’t

I do not have a tidy answer to this, and I am suspicious of anyone who claims to. I don’t think the right response is telling leaders to put the tools down. They are useful. They are getting more useful. The leaders who play with them tend to be the ones I most want to build AI strategy with, because at least they have hands-on intuition about what the agent is actually good at.

What I’ve come to believe is that the speed they experienced is real, and the conclusion they drew from it is wrong, and the gap between those two things doesn’t close from the demo side. You cannot stage a demo that contains the part the demo is missing. You can only earn the leader’s patience by being honest about what you’re not showing them, before they decide you’re padding the estimate.

If there’s anything I’d ask of leaders who have had a great Saturday afternoon with an agent: hold the speed as a real signal, and hold the suspicion that something is being hidden from you as a real signal too. Both are true. The first one is easier to feel. The second one is the one that keeps your project alive.

That second signal — the one that’s harder to feel — is the kind of work we do at Sage IT. Taking the prototype that excited the room and turning it into the system that holds up under real use. If that’s the gap you’re staring at, we’re a good conversation to have.